RTSP Protocol: A Rough Overview

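When I graduated, I wrote a simple live-streaming app using RTSP with H264. The main logic relied on ffmpeg components for playback and control, and the core of it was encoding/decoding and audio-video synchronization. All of that lives at the application layer. Recently, while studying Dahua and Hikvision cameras, I found that I needed to write a fuzzer, which means understanding the details of the protocol, so this post is a record of that : )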

URL

rtsp_url = ("rtsp:" | "rtspu:") "//" host [":" port] /[abs_path]/content_name

For example: rtsp://192.168.1.13:554/cam/realmonitor?channel=1&subtype=0

To play the stream in VLC or another stream player, we need to put the username and password in the URL.

So it becomes: rtsp://admin:password@192.168.1.13:554/cam/realmonitor?channel=1&subtype=0
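As a minimal sketch of how a client might take such a URL apart before connecting, the example below uses Python's standard `urllib.parse.urlsplit`. The helper name `parse_rtsp_url` is hypothetical, and falling back to port 554 when none is given is an assumption based on the default RTSP port.

```python
from urllib.parse import urlsplit

def parse_rtsp_url(url):
    """Split an RTSP URL into the parts a client needs to connect."""
    parts = urlsplit(url)
    if parts.scheme not in ("rtsp", "rtspu"):
        raise ValueError(f"not an RTSP URL: {url!r}")
    return {
        "scheme": parts.scheme,
        "username": parts.username,  # None when no credentials are embedded
        "password": parts.password,
        "host": parts.hostname,
        "port": parts.port or 554,   # assumed default RTSP port
        "path": parts.path,
        "query": parts.query,
    }

info = parse_rtsp_url(
    "rtsp://admin:password@192.168.1.13:554/cam/realmonitor?channel=1&subtype=0"
)
print(info)
```

A fuzzer can mutate each of these fields separately instead of treating the URL as one opaque string.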

Methods

OPTIONS, DESCRIBE, SETUP, PLAY, GET_PARAMETER, TEARDOWN

Note:

“Client” in this post means a stream player.

“Server” in this post means an IP camera.

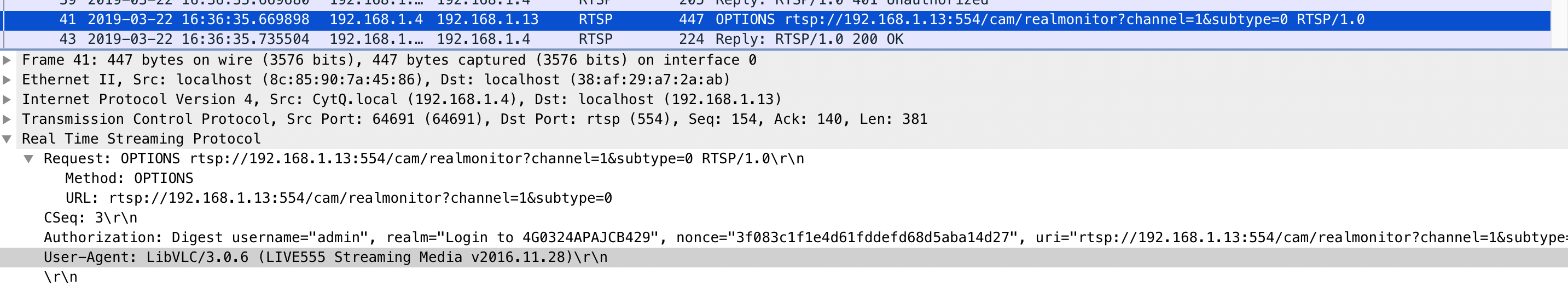

OPTIONS

- The client sends it to the server.

- The server returns the methods it supports.

Example:

Request:

As we can see, the Authorization header carries the username, the password-derived credentials, and the other fields needed for authentication. The 4G0324APAJCB429 in realm is the camera's serial number, which uniquely identifies each IoT device.

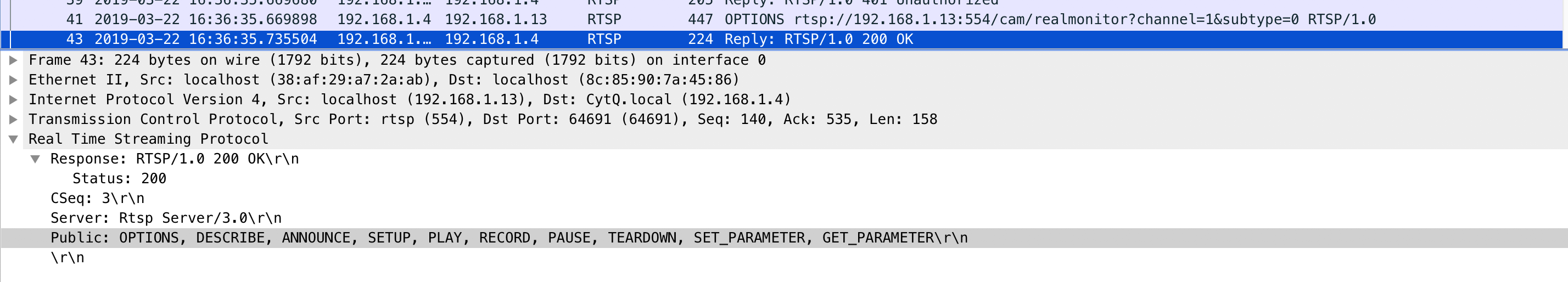
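The presence of a realm field suggests Digest authentication, where the password itself is never sent. Assuming the camera uses the plain RFC 2617 scheme without qop, the response value could be computed roughly as below. The function names, the nonce, and the exact realm string are made up for illustration; the real realm and nonce are whatever the server sent in its WWW-Authenticate header.

```python
import hashlib

def md5_hex(text):
    """Hex MD5 of a UTF-8 string."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()

def digest_response(username, password, realm, nonce, method, uri):
    """Digest response without qop: MD5(HA1:nonce:HA2)."""
    ha1 = md5_hex(f"{username}:{realm}:{password}")
    ha2 = md5_hex(f"{method}:{uri}")
    return md5_hex(f"{ha1}:{nonce}:{ha2}")

def authorization_header(username, password, realm, nonce, method, uri):
    """Assemble the Authorization header value for one request."""
    response = digest_response(username, password, realm, nonce, method, uri)
    return (
        f'Digest username="{username}", realm="{realm}", '
        f'nonce="{nonce}", uri="{uri}", response="{response}"'
    )

URI = "rtsp://192.168.1.13:554/cam/realmonitor?channel=1&subtype=0"
header = authorization_header(
    "admin", "password",
    realm="4G0324APAJCB429",     # assumed realm string, illustration only
    nonce="0123456789abcdef",    # made-up nonce
    method="OPTIONS", uri=URI,
)
print("Authorization:", header)
```

Because the method name is part of the hash, the response has to be recomputed for every request type (OPTIONS, DESCRIBE, SETUP, and so on).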

Response:

In the response, we can see the Public header. It lists all the methods supported by the server.

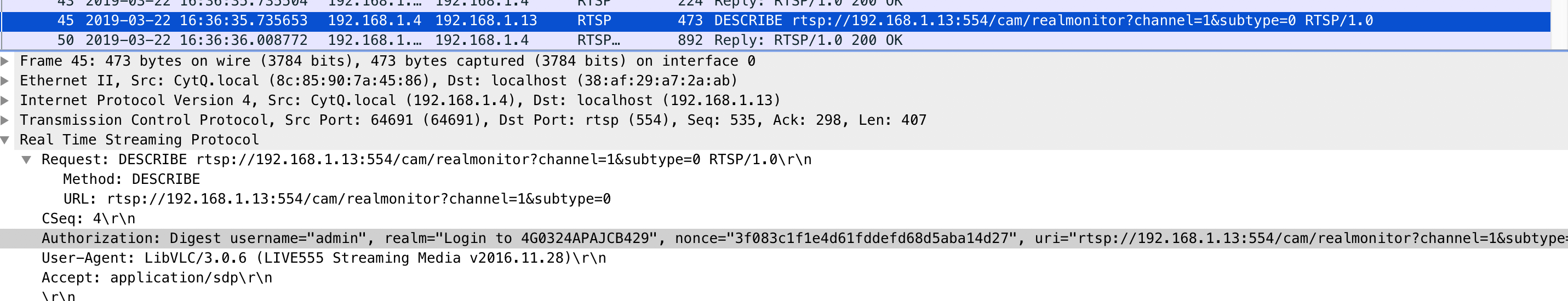

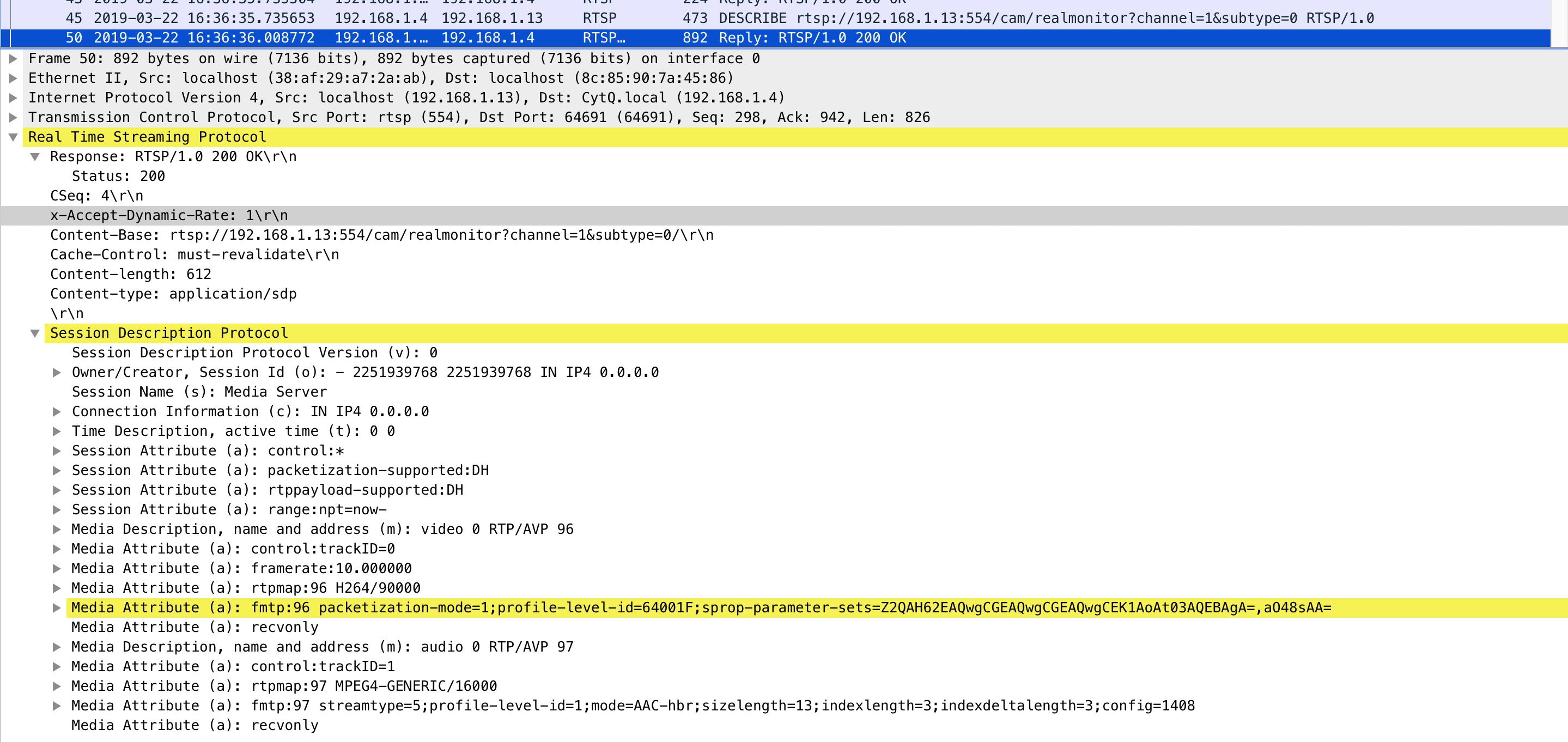
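The OPTIONS exchange can be sketched in a few lines of Python. This is an illustration rather than a full client: `build_request` and `parse_public` are hypothetical helper names, the User-Agent value is arbitrary, and the sample response is written by hand, not captured from a camera.

```python
def build_request(method, url, cseq, headers=None):
    """Serialize an RTSP/1.0 request: request line, headers, blank line."""
    lines = [f"{method} {url} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (headers or {}).items():
        lines.append(f"{name}: {value}")
    return "\r\n".join(lines) + "\r\n\r\n"

def parse_public(response):
    """Return the method names listed in the Public header, or [] if absent."""
    for line in response.split("\r\n")[1:]:
        name, sep, value = line.partition(":")
        if sep and name.strip().lower() == "public":
            return [m.strip() for m in value.split(",") if m.strip()]
    return []

URL = "rtsp://192.168.1.13:554/cam/realmonitor?channel=1&subtype=0"
request = build_request("OPTIONS", URL, 1, {"User-Agent": "example-client"})

# Hand-written response for illustration only
sample_response = (
    "RTSP/1.0 200 OK\r\n"
    "CSeq: 1\r\n"
    "Public: OPTIONS, DESCRIBE, SETUP, PLAY, GET_PARAMETER, TEARDOWN\r\n"
    "\r\n"
)
methods = parse_public(sample_response)
print(request)
print(methods)
```

The CSeq value increases with each request, and the server echoes it back so the client can match responses to requests.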

DESCRIBE

- The client sends it to get a description of a specific media resource.

The Accept header tells the server which description format the client wants. In this request, it is SDP (the Session Description Protocol, which I will introduce in the next post).

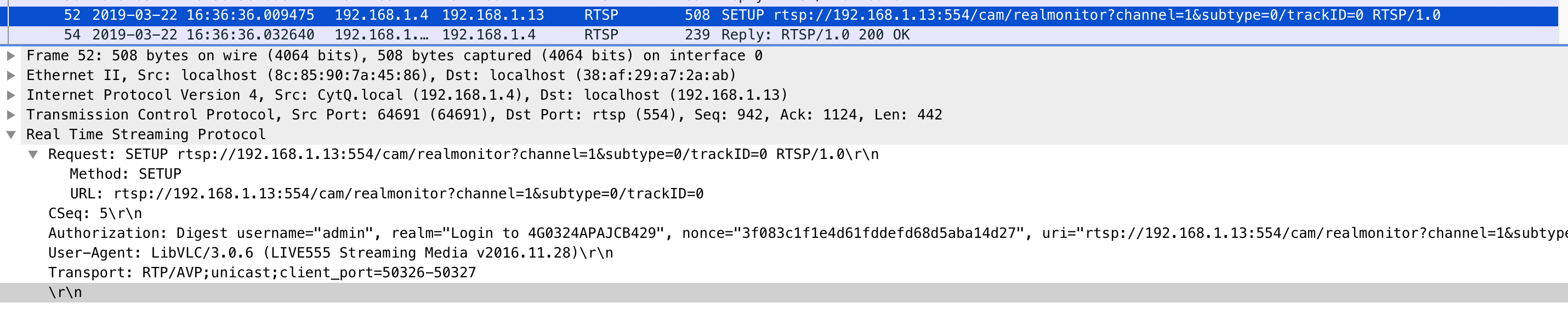
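To make the DESCRIBE exchange concrete, here is a rough sketch of the request and of splitting the reply into status, headers, and body. `parse_response` is a hypothetical helper, and the SDP body is a tiny hand-written placeholder, not what a real camera returns.

```python
def parse_response(raw):
    """Split a raw RTSP response into (status_code, headers, body)."""
    head, _, body = raw.partition("\r\n\r\n")
    status_line, *header_lines = head.split("\r\n")
    version, code, _reason = status_line.split(" ", 2)
    if not version.startswith("RTSP/"):
        raise ValueError(f"unexpected status line: {status_line!r}")
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return int(code), headers, body

describe_request = (
    "DESCRIBE rtsp://192.168.1.13:554/cam/realmonitor?channel=1&subtype=0 RTSP/1.0\r\n"
    "CSeq: 2\r\n"
    "Accept: application/sdp\r\n"
    "\r\n"
)

# Hand-written placeholder SDP, for illustration only
sample_sdp = "v=0\r\nm=video 0 RTP/AVP 96\r\n"
sample_response = (
    "RTSP/1.0 200 OK\r\n"
    "CSeq: 2\r\n"
    "Content-Type: application/sdp\r\n"
    f"Content-Length: {len(sample_sdp)}\r\n"
    "\r\n"
) + sample_sdp

status, headers, body = parse_response(sample_response)
print(status, headers["content-type"], len(body))
```

A real client would read exactly Content-Length bytes from the socket for the body rather than assuming the whole response arrives in one piece.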

SETUP

This method has two steps.

The first step:

- The client proposes how the media should be transported and sends that proposal to the server. Look at the Transport header.

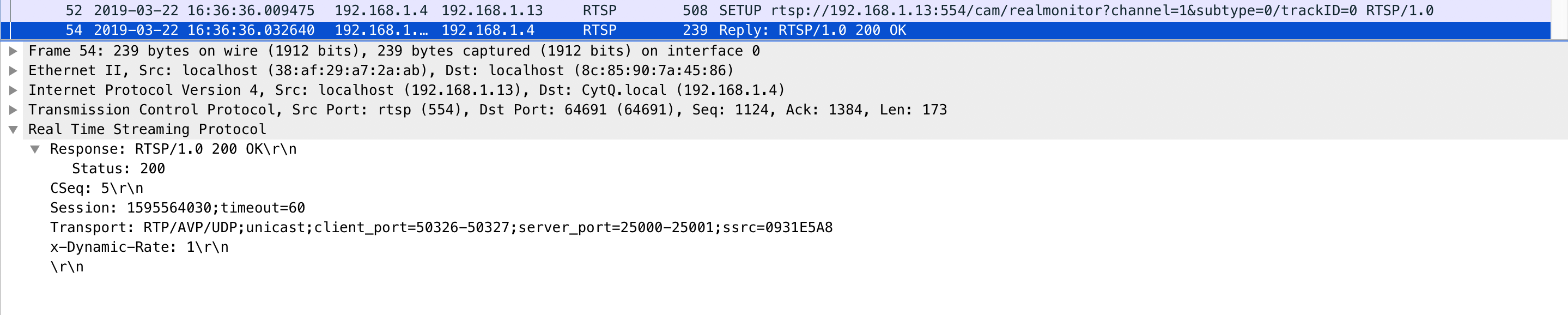

- If the server agrees, it generates a session ID and returns it to the client.

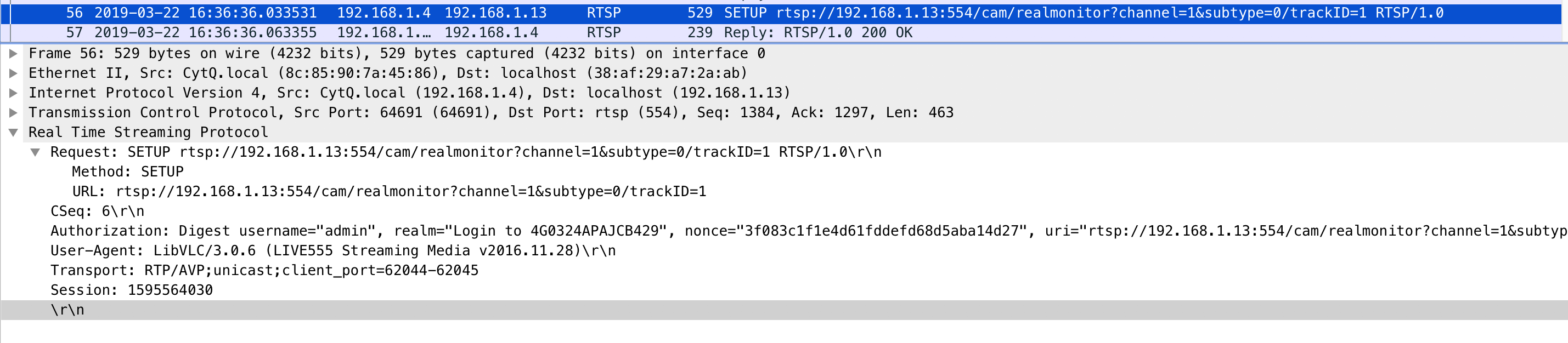

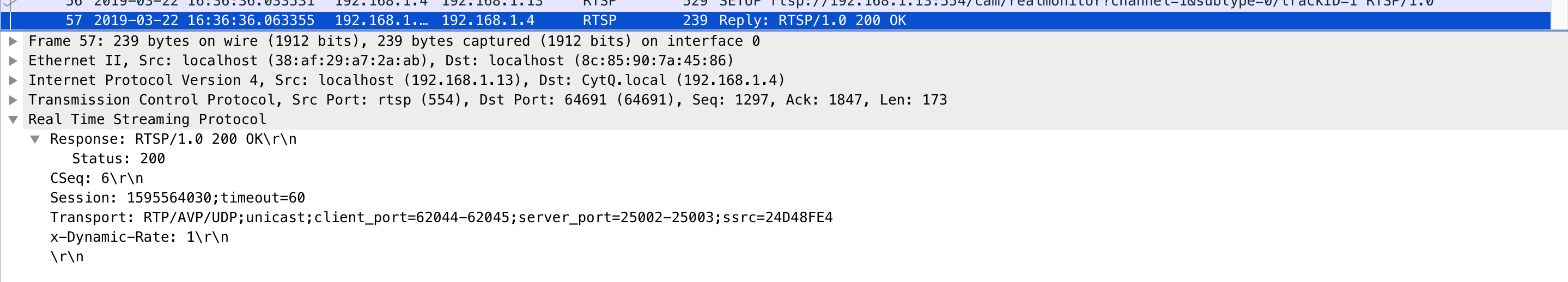

The second step:

- The client sends a new request carrying the session ID to establish the session.

- The client does not start playback until the server confirms this request.

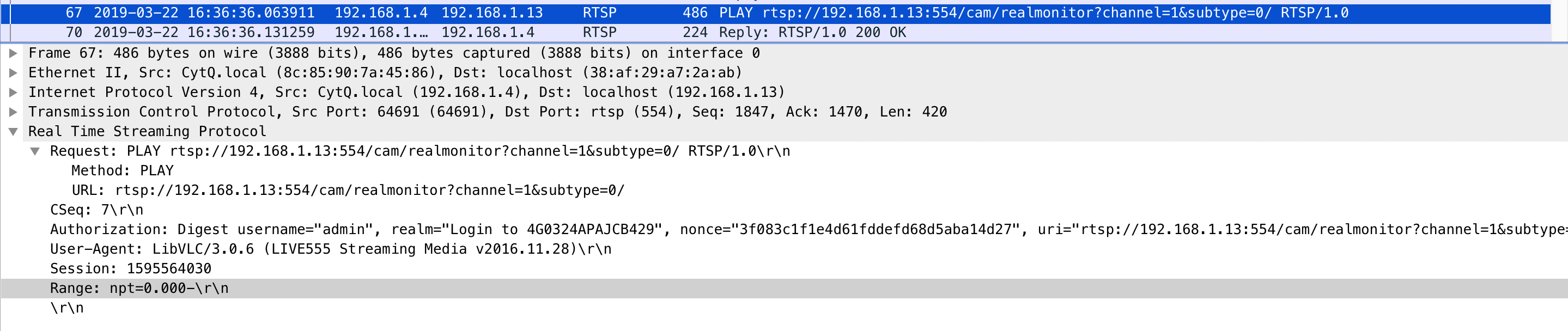
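The two SETUP steps revolve around two headers, Transport and Session. The sketch below builds a Transport value and extracts the session ID from a Session header. The function names, port numbers, and sample session value are illustrative assumptions; the 60-second fallback is the default timeout given in RFC 2326.

```python
def transport_header(client_rtp_port=None, interleaved=None):
    """Build a Transport value for RTP over UDP or interleaved over TCP."""
    if interleaved is not None:
        # RTP and RTCP share the RTSP TCP connection on two channels
        return f"RTP/AVP/TCP;unicast;interleaved={interleaved}-{interleaved + 1}"
    # RTP on the given UDP port, RTCP on the next one
    return f"RTP/AVP;unicast;client_port={client_rtp_port}-{client_rtp_port + 1}"

def parse_session(value):
    """Split 'id;timeout=N' into (session_id, timeout_seconds)."""
    session_id, _, params = value.partition(";")
    timeout = 60  # default when the server gives no timeout
    for param in params.split(";"):
        key, _, val = param.partition("=")
        if key.strip().lower() == "timeout" and val.strip().isdigit():
            timeout = int(val)
    return session_id.strip(), timeout

# First step: propose a transport
print("Transport:", transport_header(client_rtp_port=8000))

# Second step: reuse the session ID the server returned (made-up value)
session_id, timeout = parse_session("1234567890;timeout=30")
print("Session:", session_id, "timeout:", timeout)
```

Every later request in the same session (PLAY, GET_PARAMETER, TEARDOWN) carries the same `Session: <id>` header.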

PLAY

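npt in the Range header is short for Normal Play Time. It gives the position in the stream at which playback starts: npt=0- plays from the beginning (for a live camera, effectively now), and npt=5- starts 5 seconds into the stream.

When the server receives PLAY requests, it puts them into a queue and executes them one by one, in order.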

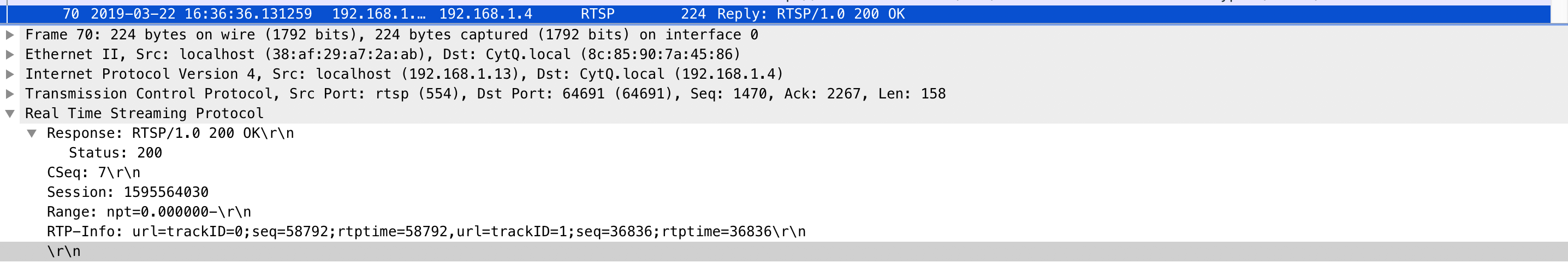

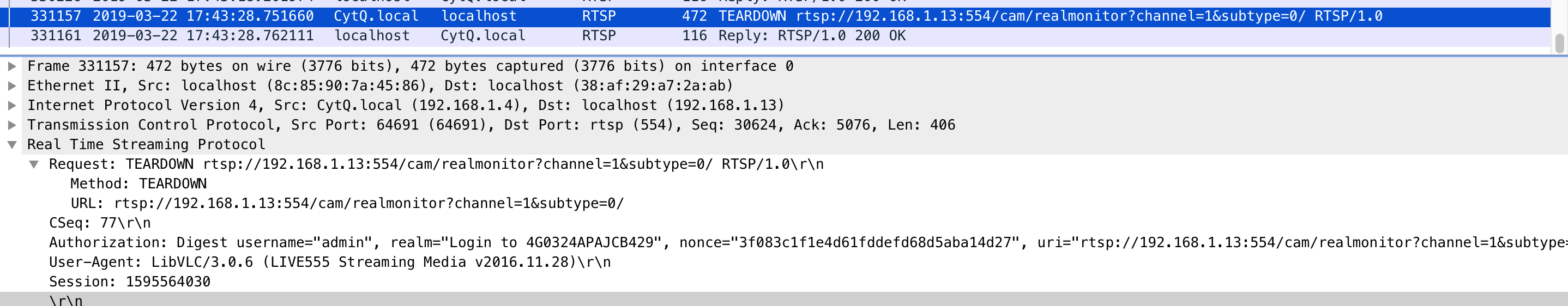

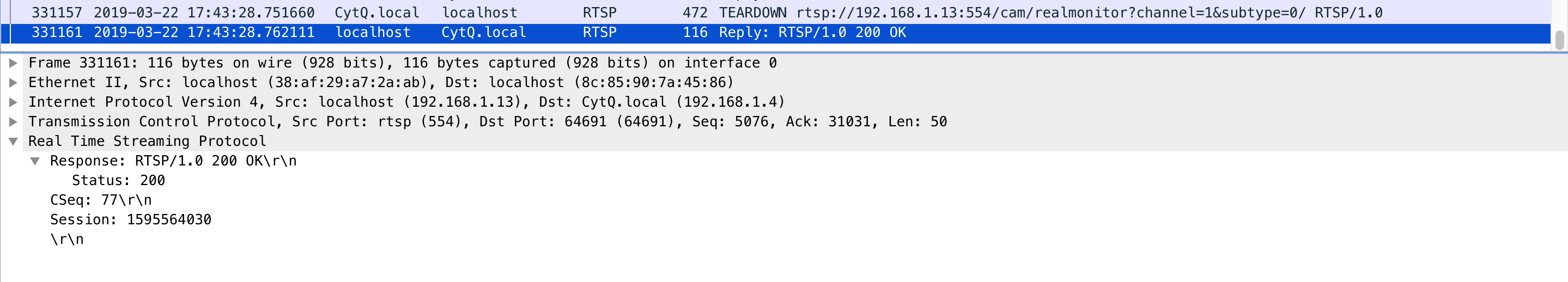

TEARDOWN

Terminates the session: the server stops delivering the stream and frees the resources associated with it.
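Putting the methods together, a session moves through a few states. The sketch below is a deliberately simplified model limited to the methods covered in this post (no PAUSE or RECORD); the state names and the `next_state` and `run` helpers are assumptions for illustration. A model like this lets a fuzzer tell in-order sequences from out-of-order ones.

```python
# Simplified client-side state model, covering only the methods in this post
TRANSITIONS = {
    ("init", "SETUP"): "ready",
    ("ready", "SETUP"): "ready",
    ("ready", "PLAY"): "playing",
    ("playing", "PLAY"): "playing",
    ("ready", "TEARDOWN"): "init",
    ("playing", "TEARDOWN"): "init",
}

# Methods treated here as not changing the session state
STATELESS = {"OPTIONS", "DESCRIBE", "GET_PARAMETER"}

def next_state(state, method):
    """Return the state after sending `method`, or raise if out of order."""
    if method in STATELESS:
        return state
    try:
        return TRANSITIONS[(state, method)]
    except KeyError:
        raise ValueError(f"{method} is not valid in state {state!r}") from None

def run(methods, state="init"):
    """Walk a sequence of methods through the state model."""
    for method in methods:
        state = next_state(state, method)
    return state

final = run(["OPTIONS", "DESCRIBE", "SETUP", "SETUP", "PLAY", "TEARDOWN"])
print(final)
```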

Resources

Important RTSP methods: https://blog.csdn.net/qingkongyeyue/article/details/76735528

RTSP protocol analysis: https://blog.csdn.net/caoshangpa/article/details/53191630